Can AI generate geographically accurate, client-ready hero images for Australian businesses? We ran 18 experiments across 130+ images to find out.

Real Jezweb client business types. Each image combines search-based references (one location query, one industry query) with a detailed narrative prompt. The results are ship-ready hero images.

11 business types across 5 categories, all generated with search references and narrative prompts. The pipeline generalised across the industries we tested: 11 of 12 business types came out client-ready.

We used the `streetview` Python library to download real 360-degree panoramas (16,384 x 8,192 pixels) from Google Maps user photos. Cropped to the relevant viewing direction, these give the best reference quality we've seen.
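Cropping an equirectangular panorama to one viewing direction mostly means mapping a compass heading onto pixel columns. The sketch below makes a few assumptions. Column 0 faces a known heading, given by `north_offset_deg`, which is a hypothetical parameter: real panoramas carry their own orientation metadata. Columns are assumed to advance clockwise, and the view may wrap across the 0/360 seam. This is a flat strip crop, not a true perspective reprojection.

```python
def crop_columns(
    pano_width: int,
    heading_deg: float,
    fov_deg: float,
    north_offset_deg: float = 0.0,
) -> list[tuple[int, int]]:
    """Return [start, end) pixel-column ranges covering one viewing direction.

    Assumes an equirectangular panorama whose column 0 faces north_offset_deg
    and whose columns advance clockwise (a hypothetical convention; check the
    orientation metadata of your panorama source).
    """
    if not 0 < fov_deg <= 360:
        raise ValueError("fov_deg must be in (0, 360]")
    px_per_deg = pano_width / 360.0
    # Left edge of the view, in degrees measured from column 0.
    left_deg = (heading_deg - north_offset_deg - fov_deg / 2) % 360.0
    start = round(left_deg * px_per_deg) % pano_width
    width = round(fov_deg * px_per_deg)
    end = start + width
    if end <= pano_width:
        return [(start, end)]
    # The view crosses the 0/360 seam: split it into two ranges that the
    # caller crops separately and pastes side by side.
    return [(start, pano_width), (0, end - pano_width)]


if __name__ == "__main__":
    # A 16,384-pixel-wide panorama, looking due east with a 90-degree field of view.
    print(crop_columns(16384, heading_deg=90, fov_deg=90))  # → [(2048, 6144)]
```

With Pillow, each range becomes one `img.crop((start, 0, end, img.height))` call. A seam-crossing view yields two crops that are joined horizontally.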

Instead of relying solely on Google Images, we pull references from 4 sources in parallel: Google Images (with improved negative keyword queries), Google Maps Places API (real user photos), Wikimedia Commons, and Streetview panoramas. AI curation then picks the best 3-4 refs based on location accuracy, normal state (no events/decorations), composition value, and technical quality.
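Below is a minimal sketch of the parallel fan-out. It assumes each source is wrapped in a hypothetical async fetcher; the source names and the `Candidate` type are illustrative, not real client libraries. Two things let one failing source drop out without stalling the others: a per-source timeout and `asyncio.gather(..., return_exceptions=True)`. The surviving candidates would then go to AI curation.

```python
import asyncio
from dataclasses import dataclass
from typing import Awaitable, Callable


@dataclass(frozen=True)
class Candidate:
    source: str  # e.g. "google_images", "maps_places", "wikimedia", "streetview"
    url: str


# Each fetcher is a hypothetical async wrapper around one reference source.
Fetcher = Callable[[str], Awaitable[list[Candidate]]]


async def gather_references(
    query: str, fetchers: dict[str, Fetcher], timeout_s: float = 10.0
) -> tuple[list[Candidate], list[str]]:
    """Query every source concurrently; a source that errors or times out is skipped."""

    async def run(fetch: Fetcher) -> list[Candidate]:
        return await asyncio.wait_for(fetch(query), timeout=timeout_s)

    names = list(fetchers)
    # return_exceptions=True keeps one failing source from cancelling the rest.
    results = await asyncio.gather(
        *(run(fetchers[name]) for name in names), return_exceptions=True
    )
    candidates: list[Candidate] = []
    failed: list[str] = []
    for name, result in zip(names, results):
        if isinstance(result, BaseException):
            failed.append(name)
        else:
            candidates.extend(result)
    return candidates, failed


async def _demo() -> None:
    async def maps_places(q: str) -> list[Candidate]:
        return [Candidate("maps_places", "https://example.com/photo1.jpg")]

    async def wikimedia(q: str) -> list[Candidate]:
        raise RuntimeError("source unavailable")

    found, failed = await gather_references(
        "example location", {"maps_places": maps_places, "wikimedia": wikimedia}
    )
    print(len(found), failed)  # → 1 ['wikimedia']


if __name__ == "__main__":
    asyncio.run(_demo())
```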

Full sets of website images generated for a single business. The wedding suite uses scraped client photos as style references (reference-guided). The cafe suite uses only prompts (cold-start). Both produce cohesive, professional results.

Same location, different compositions. The model generates distinct hero styles from the same reference photos. All 16:9 with text overlay zones.

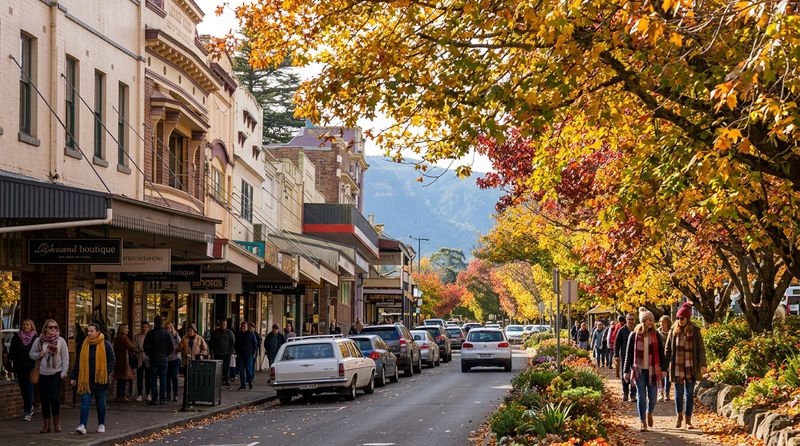

The model produces surprisingly accurate Australian scenes, even when given bad references or no references at all.

What we learned across 18 experiments and 130+ images.

| Capability | Status | Best Approach |
|---|---|---|
| Stock photo replacement | Ready | Detailed prompt + camera specs + AU context |
| Reference-inspired generation | Ready | Feed stock photo as reference + detailed prompt |
| Famous landmarks | Ready | Plain prompt (training data sufficient) |
| Regional landmarks | Ready | Search-based reference pipeline (3-5 photos) |
| Client hero images | Ready | Two-query search (location + industry) + narrative prompt |
| Hero composition (text overlay) | Ready | "Subject on right 60%, left 40% clear sky for text" |
| 16:9 hero images | Ready | `imageConfig: { aspectRatio: "16:9" }` |
| Generate-until-happy workflow | Ready | Temperature 0.8, run 3x, pick best (~$0.15) |
| AI reference curation | Ready | Gemini Flash ranks 8 candidates, picks best 3-4 for generation |
| QA verification loop | Ready | Vision model catches errors; 2 passes dramatically improve accuracy |
| Panorama references | Ready | 360-degree user panoramas provide best reference quality |
| Trades & services | Ready | Solar, plumbing, landscaping, hair, fitness all client-ready |
| Multi-source references | Ready | 4 sources + AI curation rejects wrong locations, events, night shots; resilient when one source fails |
| Full image library suite | Ready | 8 cohesive images per business from scraped reference photos; cold-start also viable |
| Website photo scraping | Ready | WordPress sites richest (50+ images); automatically categorised into hero/work/team/logo |
| Instagram scraping (Apify) | Ready | 1080px business photos at ~$0.003/result; Facebook blocked without auth |
| Cold-start generation | Ready | 5-part framework + shared prompt anchors create cohesion without references |
| Curation reliability | Fixed | Increase maxOutputTokens to 4096; 7-step cascade parser as safety net; 5/5 success |
| Street-level accuracy | Limited | Coordinate targeting unreliable; panorama refs or curated search better |
| Icons / transparent assets | Use GPT | Gemini can't output transparent backgrounds |
| Text rendering on images | Use GPT | GPT Image 1.5 renders text more reliably |
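The 16:9, hero composition, and generate-until-happy rows of the table above combine into one request shape. This sketch only builds the JSON body for Gemini's `generateContent` REST endpoint and makes no network call. The field names (`generationConfig`, `responseModalities`, `imageConfig.aspectRatio`) reflect our understanding of the public API and should be checked against current docs. The helper names and the example prompt are hypothetical.

```python
import json

# Composition wording from the "Hero composition" row of the table above.
HERO_COMPOSITION = "Subject on right 60%, left 40% clear sky for text."


def build_hero_request(
    prompt: str, temperature: float = 0.8, aspect_ratio: str = "16:9"
) -> dict:
    """Build a generateContent JSON body for one hero image (no network call).

    Field names reflect our reading of the Gemini REST API; verify them
    against the current documentation.
    """
    return {
        "contents": [{"parts": [{"text": f"{prompt} {HERO_COMPOSITION}"}]}],
        "generationConfig": {
            "temperature": temperature,
            "responseModalities": ["TEXT", "IMAGE"],
            "imageConfig": {"aspectRatio": aspect_ratio},
        },
    }


def happy_batch(prompt: str, runs: int = 3) -> list[dict]:
    """'Run 3x, pick best': identical requests, with temperature 0.8 supplying variety."""
    return [build_hero_request(prompt) for _ in range(runs)]


if __name__ == "__main__":
    # Hypothetical prompt: detailed scene + camera specs + Australian context.
    body = build_hero_request(
        "Photorealistic wide shot of a solar installer on a suburban Australian "
        "roof, 24mm lens, late-morning sun, eucalyptus in the background."
    )
    print(json.dumps(body["generationConfig"], indent=2))
```

The three bodies from `happy_batch` are identical on purpose. The variation comes from sampling at temperature 0.8, and a human (or the QA step) picks the best result.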

The validated workflow for generating client-ready image libraries.

Client website + Instagram photos. WordPress sites yield 50+ images. Auto-categorise (hero, team, work, logo).

SerpAPI + Google Maps Places for location context. 6-8 candidates per query. Fall back to cold-start if no scrape data.
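The two-query image search can be sketched as plain URL construction with no network call. The following are assumptions based on our understanding of SerpAPI and should be checked against its docs: the `google_images` engine, the `q`/`gl`/`hl`/`api_key` parameters, and the `images_results[].original` response field. The negative terms are hypothetical, chosen to mirror what curation later rejects, and the example location is illustrative only.

```python
from urllib.parse import urlencode

SERPAPI_ENDPOINT = "https://serpapi.com/search.json"

# Hypothetical exclusion terms aimed at what curation later rejects
# (events, decorations, night shots). Tune them per location.
NEGATIVE_TERMS = ("night", "festival", "christmas", "fireworks")


def image_query(text: str, negatives: tuple[str, ...] = NEGATIVE_TERMS) -> str:
    """Append Google-style "-term" exclusions to a search phrase."""
    return " ".join([text, *(f"-{term}" for term in negatives)])


def serpapi_image_url(query: str, api_key: str) -> str:
    """Build a SerpAPI Google Images request URL (no network call)."""
    params = {
        "engine": "google_images",
        "q": query,
        "gl": "au",  # Australian results
        "hl": "en",
        "api_key": api_key,
    }
    return f"{SERPAPI_ENDPOINT}?{urlencode(params)}"


def two_query_urls(location: str, industry: str, api_key: str) -> dict[str, str]:
    """One query for the place itself, one for the business type in that place."""
    return {
        "location": serpapi_image_url(image_query(location), api_key),
        "industry": serpapi_image_url(image_query(f"{industry} {location}"), api_key),
    }


def top_candidates(response: dict, n: int = 8) -> list[str]:
    """Keep the first n full-size image URLs from a parsed SerpAPI response."""
    results = response.get("images_results", [])
    return [r["original"] for r in results if "original" in r][:n]


if __name__ == "__main__":
    for name, url in two_query_urls("Nelson Bay NSW", "cafe", "YOUR_KEY").items():
        print(name, url)
```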

Gemini Flash ranks the candidates (with maxOutputTokens raised to 4096), and a 7-step cascade JSON parser recovers the ranking from its reply. The top 3-4 are selected as refs.
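The seven parser steps aren't spelled out here, so the sketch below illustrates the idea with a shorter four-step cascade of our own. It tries progressively more forgiving repairs until `json.loads` succeeds. The ranking schema `{"candidates": [{"id", "score", "reject"}]}` is a hypothetical prompt contract, not a Gemini output format.

```python
import json
import re
from typing import Any, Callable, Optional

FENCE = "`" * 3  # Markdown code-fence marker


def _as_is(text: str) -> str:
    return text


def _strip_fences(text: str) -> str:
    # Models often wrap JSON in Markdown code fences (optionally tagged "json").
    match = re.search(FENCE + r"(?:json)?\s*(.*?)" + FENCE, text, re.DOTALL)
    return match.group(1) if match else text


def _outer_braces(text: str) -> str:
    # Keep only the span from the first "{" to the last "}".
    start, end = text.find("{"), text.rfind("}")
    return text[start : end + 1] if 0 <= start < end else text


def _no_trailing_commas(text: str) -> str:
    # Remove commas before a closing bracket or brace. Crude: it would also
    # touch a ",}" that appears inside a string value.
    return re.sub(r",\s*([}\]])", r"\1", _outer_braces(text))


# Each repair is applied to the raw reply, from least to most invasive.
CASCADE: list[Callable[[str], str]] = [_as_is, _strip_fences, _outer_braces, _no_trailing_commas]


def parse_ranking(reply: str) -> Optional[Any]:
    """Return the first successful JSON parse, or None if every step fails."""
    for repair in CASCADE:
        try:
            return json.loads(repair(reply))
        except json.JSONDecodeError:
            continue
    return None


def select_refs(ranking: Any, k: int = 4) -> list[int]:
    """Top-k candidate ids by score, skipping rejected ones (hypothetical schema)."""
    if not isinstance(ranking, dict):
        return []
    kept = [c for c in ranking.get("candidates", []) if not c.get("reject", False)]
    kept.sort(key=lambda c: c.get("score", 0), reverse=True)
    return [c["id"] for c in kept[:k]]
```

Returning `None` instead of raising lets the caller choose a fallback when every repair fails, such as re-asking the model or going cold-start.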

8-image suite: hero, interior, team, product, detail, food, event, exterior. Refs anchor palette + style.
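A sketch of how the shared anchors can keep a suite cohesive: every shot prompt ends with the same anchor sentence, so palette, lighting, and camera language repeat verbatim across all images. The `SuiteAnchors` fields, the default brief wording, and the example values are hypothetical; the burgundy/blush palette is borrowed from the wedding-venue suite. Attaching the curated reference images to each request is left out.

```python
from dataclasses import dataclass
from typing import Optional

# The eight shots listed in this step.
SHOTS = ("hero", "interior", "team", "product", "detail", "food", "event", "exterior")


@dataclass(frozen=True)
class SuiteAnchors:
    """Hypothetical shared anchors repeated verbatim in every prompt of a suite."""

    business: str
    palette: str
    lighting: str
    camera: str


def build_suite_prompts(
    anchors: SuiteAnchors,
    shots: tuple[str, ...] = SHOTS,
    briefs: Optional[dict[str, str]] = None,
) -> dict[str, str]:
    """Return one prompt per shot, each ending with the same anchor sentence.

    The curated reference images would be attached to every request alongside
    these prompts; that part is not shown here.
    """
    briefs = briefs or {}
    shared = (
        f"Business: {anchors.business}. Palette: {anchors.palette}. "
        f"Lighting: {anchors.lighting}. Camera: {anchors.camera}."
    )
    prompts: dict[str, str] = {}
    for shot in shots:
        brief = briefs.get(shot, f"A {shot} photograph for the website.")
        prompts[shot] = f"{brief} {shared}"
    return prompts


if __name__ == "__main__":
    anchors = SuiteAnchors(
        "wedding venue", "burgundy and blush", "soft late-afternoon light", "35mm lens, f/2.8"
    )
    for shot, prompt in build_suite_prompts(anchors).items():
        print(f"{shot}: {prompt}")
```

Passing a shorter `shots` tuple covers smaller suites, such as the 6-image cafe set, without changing the anchors.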

Vision model checks output. If issues found, regenerate with feedback. Full suite ~$0.40, ~3 minutes.
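The check-and-regenerate loop can be sketched with the image model and the vision checker passed in as hypothetical callables. `generate`, `check`, and `QAResult` are illustrative names, not a real SDK. The loop is capped at two QA passes, matching the two-pass setting in the table, and the checker's findings are fed back into the next generation.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class QAResult:
    passed: bool
    issues: list[str] = field(default_factory=list)


# Hypothetical callables: generate(prompt, feedback) -> image bytes, and
# check(image) -> QAResult produced by a vision model.
Generate = Callable[[str, Optional[str]], bytes]
Check = Callable[[bytes], QAResult]


def generate_with_qa(
    prompt: str, generate: Generate, check: Check, max_passes: int = 2
) -> tuple[bytes, QAResult, int]:
    """Generate, check, and regenerate with the checker's feedback.

    Returns the last image, its QA result, and how many passes were used.
    If no pass succeeds, the final (failed) attempt is returned for review.
    """
    if max_passes < 1:
        raise ValueError("max_passes must be at least 1")
    feedback: Optional[str] = None
    for attempt in range(1, max_passes + 1):
        image = generate(prompt, feedback)
        result = check(image)
        if result.passed:
            return image, result, attempt
        # Feed the vision model's findings into the next generation.
        feedback = "Fix these issues: " + "; ".join(result.issues)
    return image, result, max_passes
```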

All 18 experiments in chronological order.

| # | Experiment | Images | Key Finding |
|---|---|---|---|
| 1 | Search Grounding Modes | 15 | Image grounding helps regional landmarks, not generic scenes |
| 2 | Multi-Model Benchmark | 12 | Gemini best for scenes, GPT for icons/text |
| 3 | Reference-Inspired Generation | 15 | Detailed prompt + reference beats grounding for generic scenes |
| 4 | Regional Location Accuracy | 16 | Grounding only activates for 3/8 locations; training data surprisingly good |
| 5 | Multi-Reference Pipeline | 9 | Multi-ref (3+) is the accuracy winner; we control the references |
| 6 | Street View + High-Ref-Count | 7 | Street View coordinates fragile; model ignores bad refs gracefully |
| 7 | Hero Consistency | 9 | 16:9 hero images with text overlay zones work reliably |
| 8 | Same-Prompt Repeatability | 3 | Consistent location accuracy; viewpoint varies ~20-30 degrees |
| 9 | Client Scenarios | 4 | All 4 business types genuinely ship-ready |
| 10 | Smart Reference Selection + QA Loop | 6 | AI curation + QA verification loop dramatically improves accuracy |
| 10b | Streetview Panorama References | 3 | 360-degree panoramas provide best reference quality; solved Tomaree problem |
| 12 | Expanded Business Types | 11 | 11/12 business types client-ready; trades images exceptionally strong |
| 13 | Multi-Source Reference Selection | 3 | 4 sources (Google Images, Maps Places, Wikimedia, Streetview); AI curation rejects wrong locations, events, night shots |
| 14 | DataForSEO vs SerpAPI Comparison | 0 | SerpAPI 23x faster (0.11s vs 2.48s); identical image quality; both scrape Google Images |
| 15 | Website Image Scraping | 53 | WordPress sites yield 50+ images; simple HTML sites nearly empty; auto-categorisation works |
| 16 | Instagram Scraping (Apify) | 21 | Instagram works at ~$0.003/result; Facebook blocked without auth; CDN URLs temporary |
| 17 | Wedding Venue Image Library (8 images) | 8 | Reference-guided from scraped photos; all 8 share burgundy/blush palette; style-consistent |
| 18 | Cafe/Bakery Cold-Start Suite (6 images) | 6 | No references needed; 5-part framework + shared anchors create cohesion; unmistakably Australian |